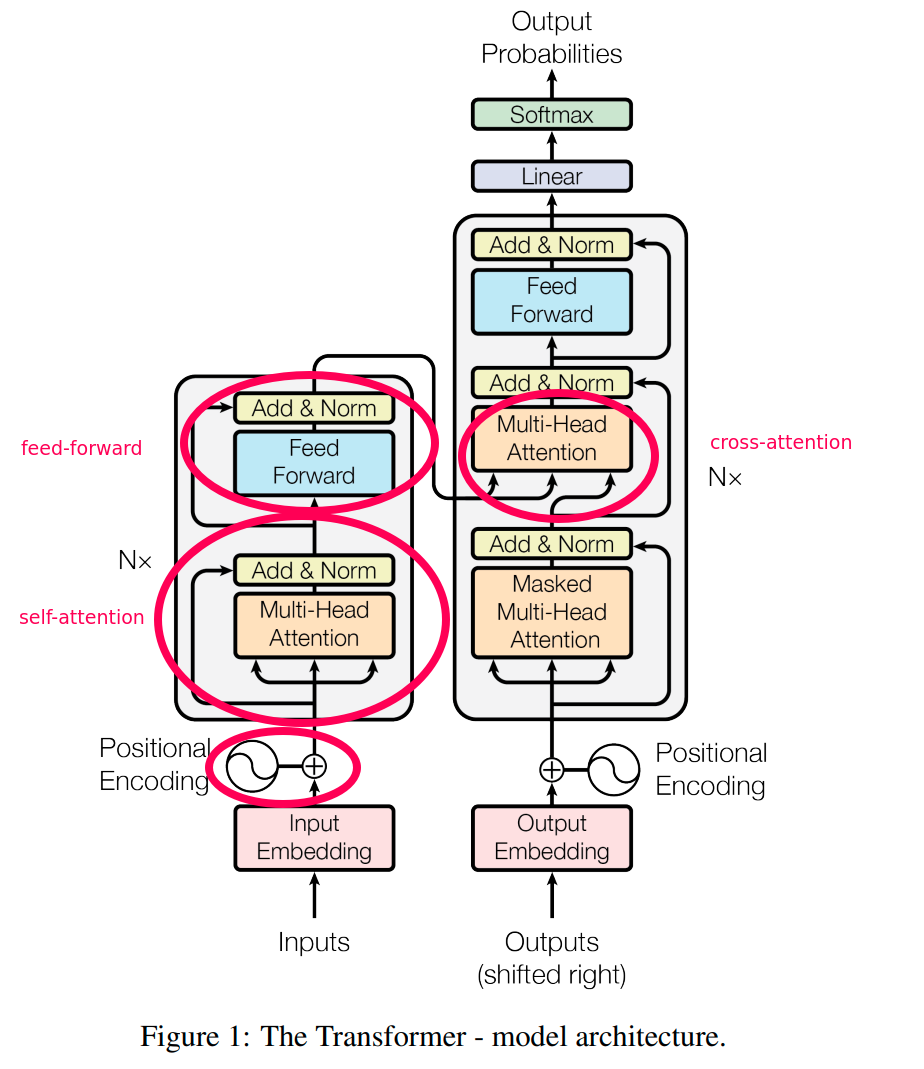

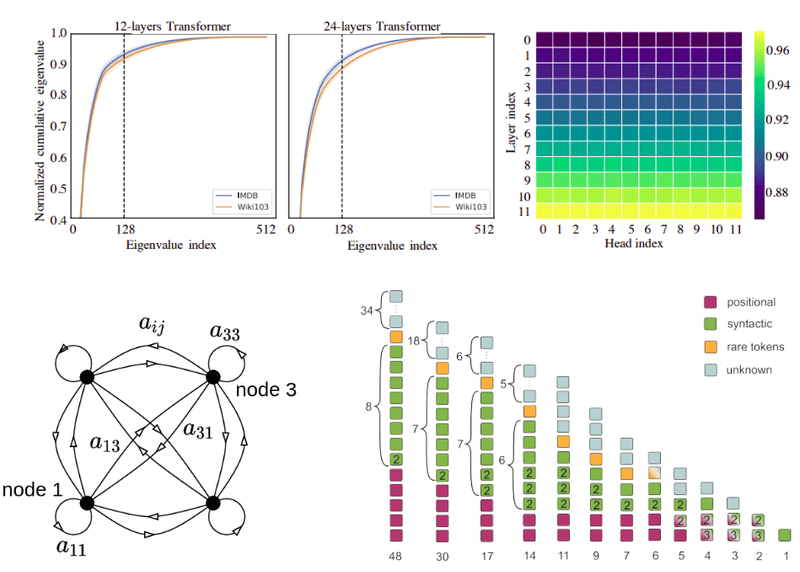

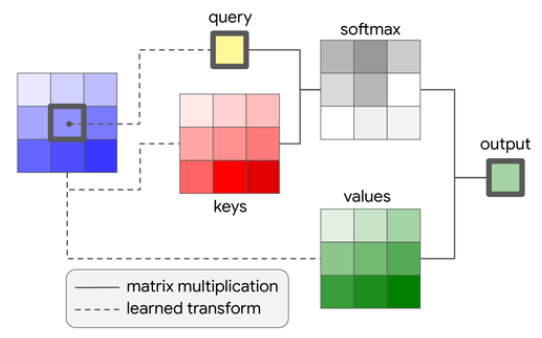

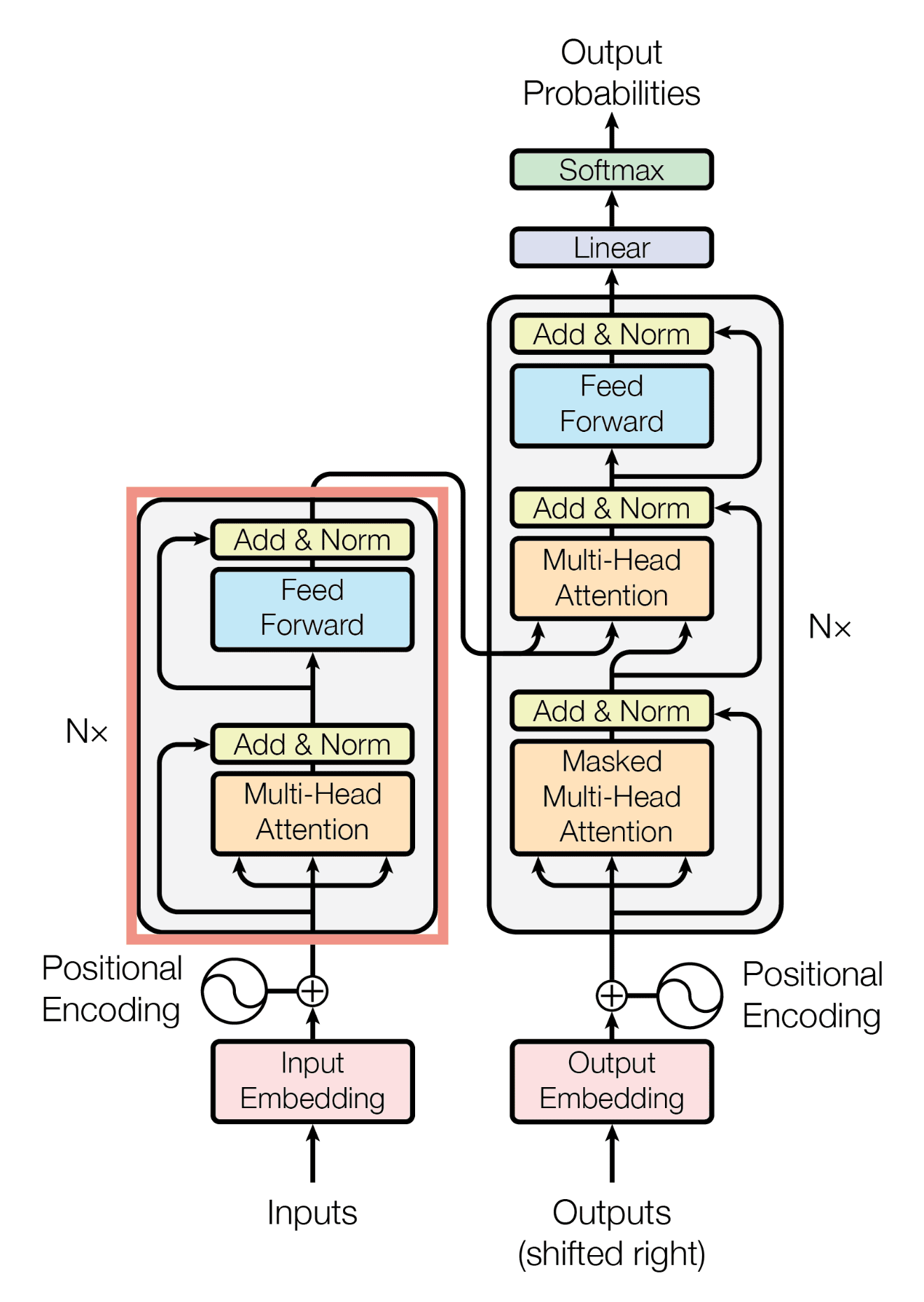

How Attention works in Deep Learning: understanding the attention mechanism in sequence models | AI Summer

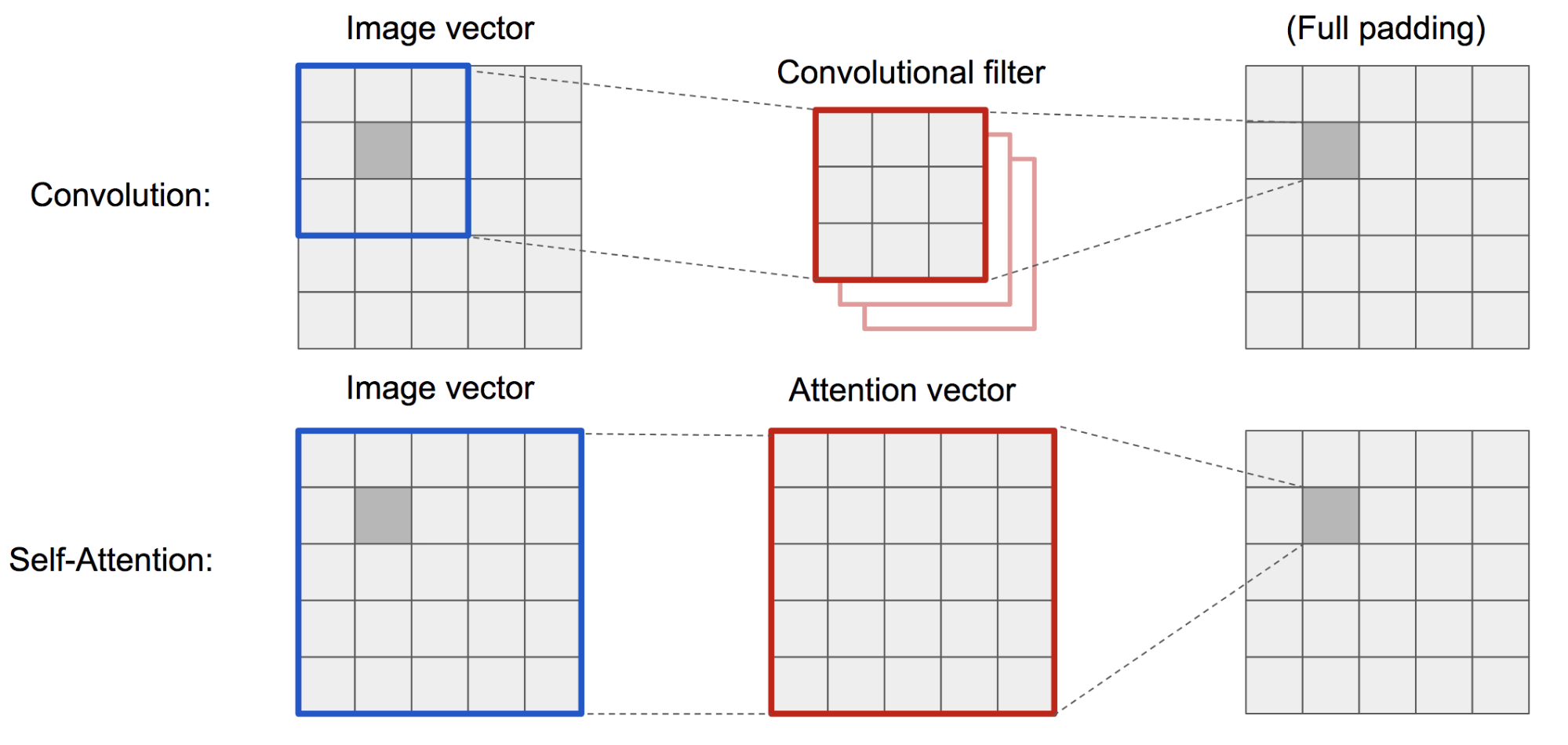

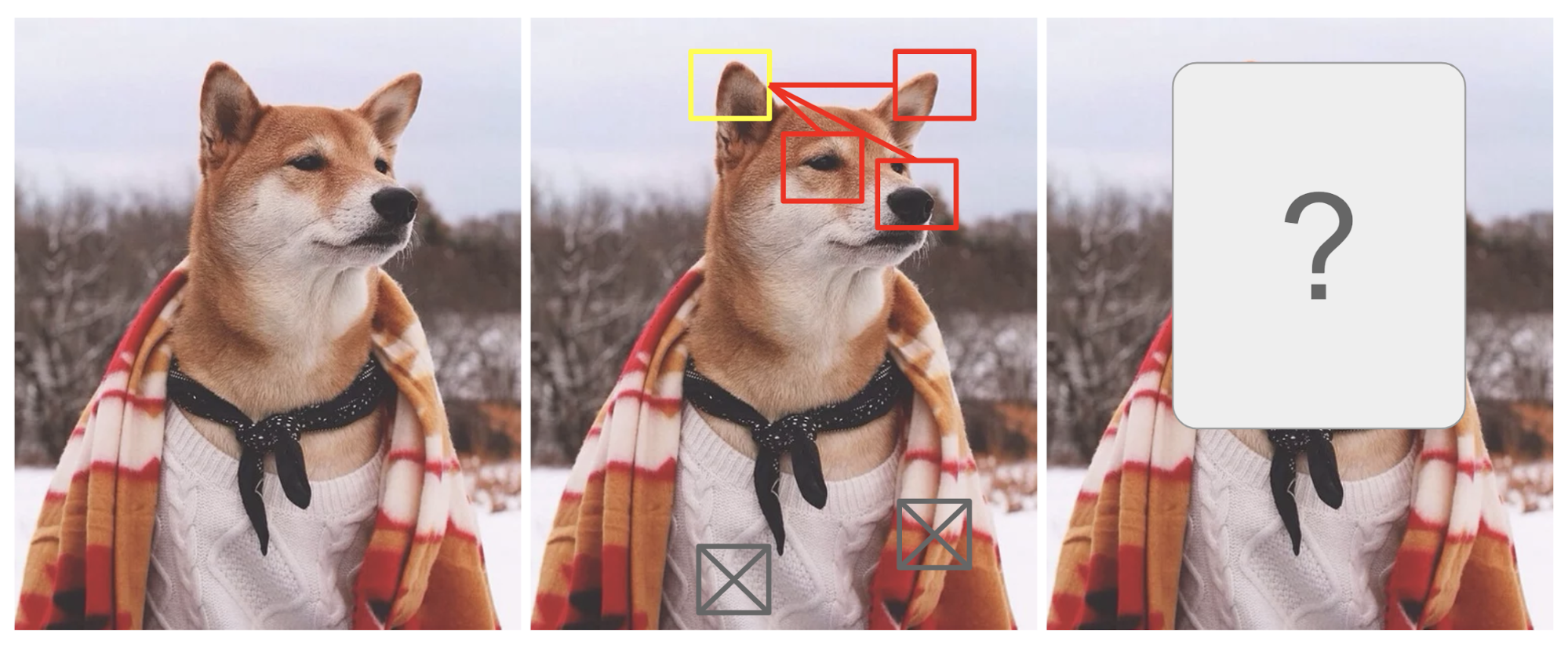

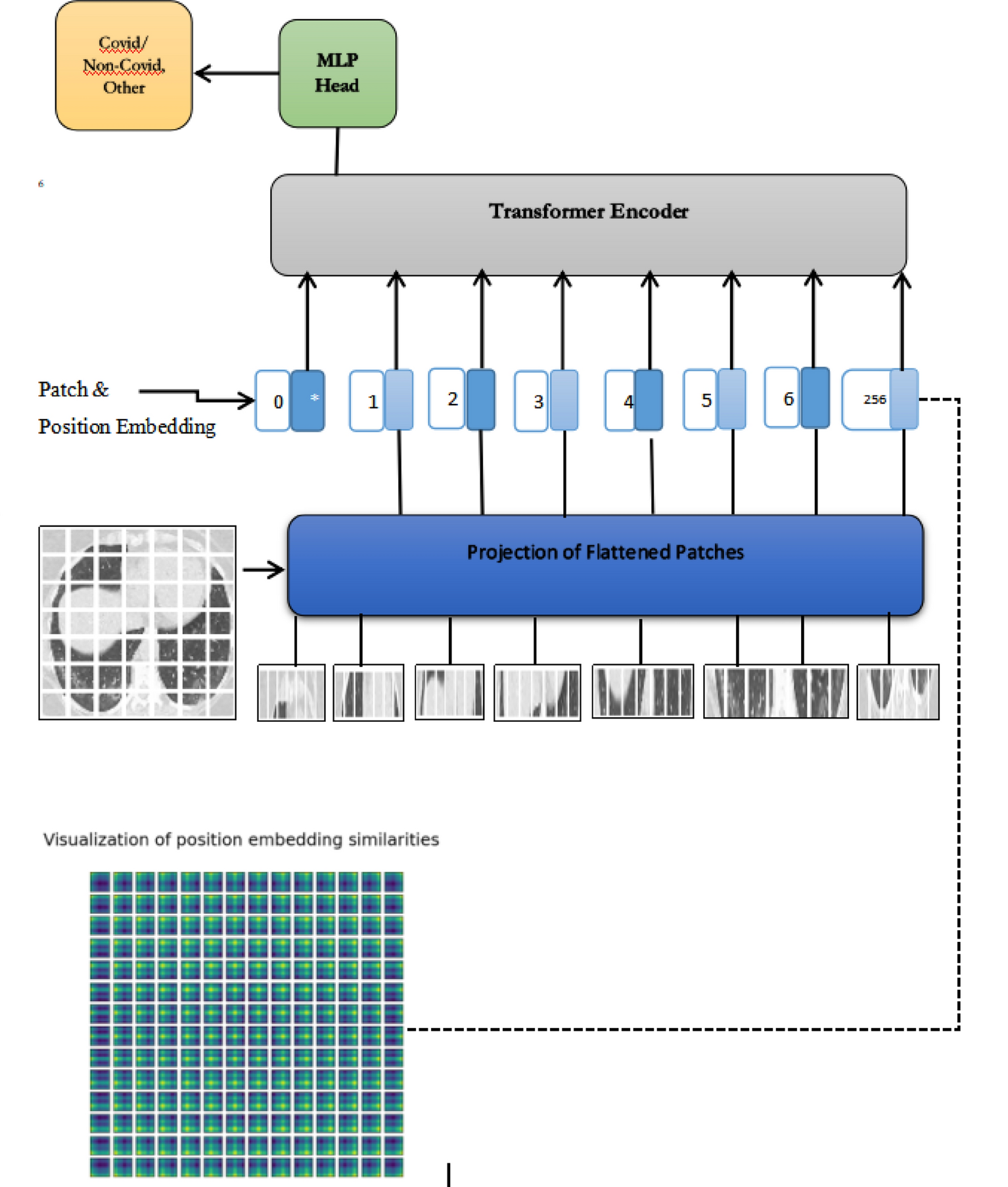

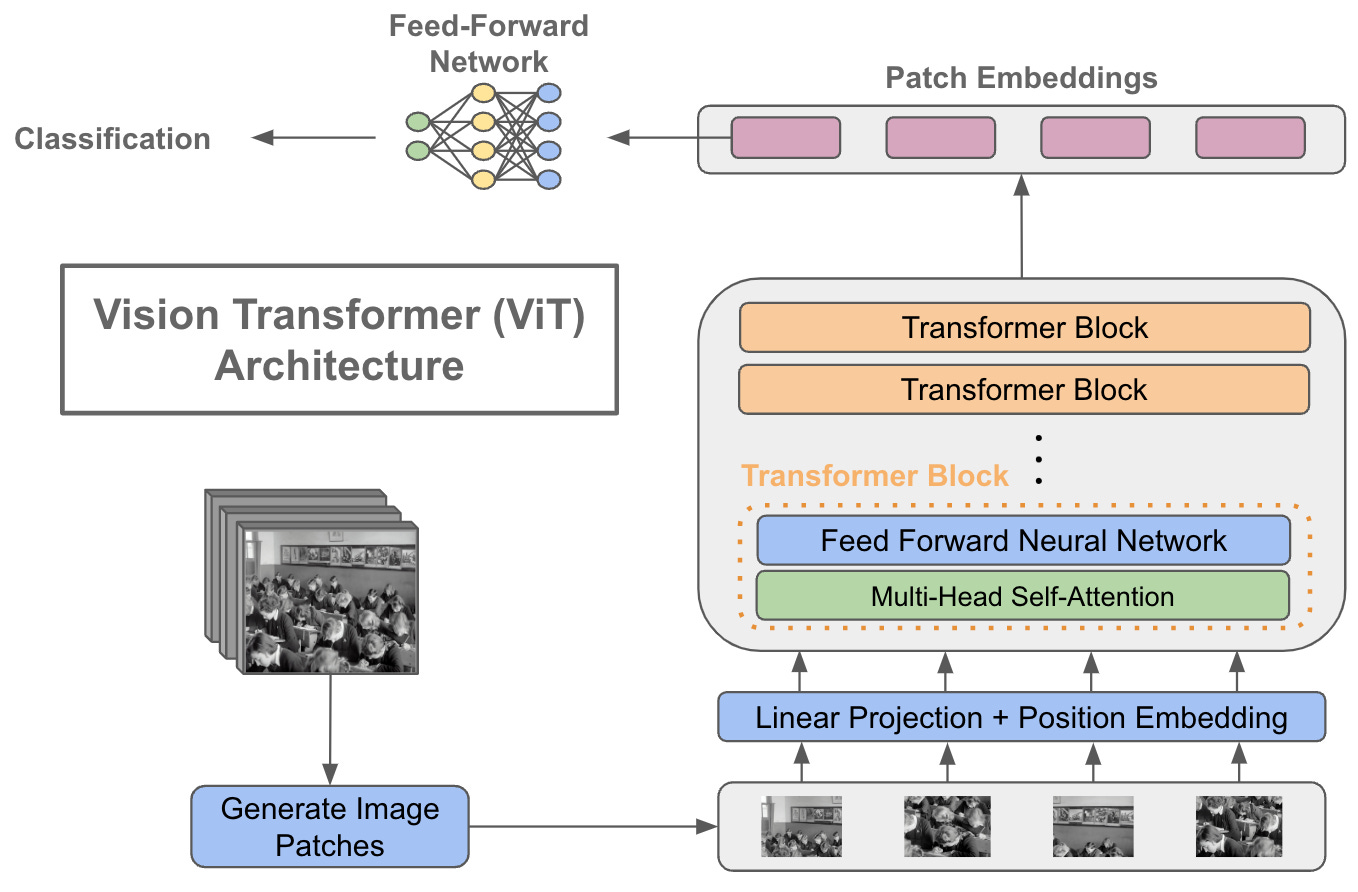

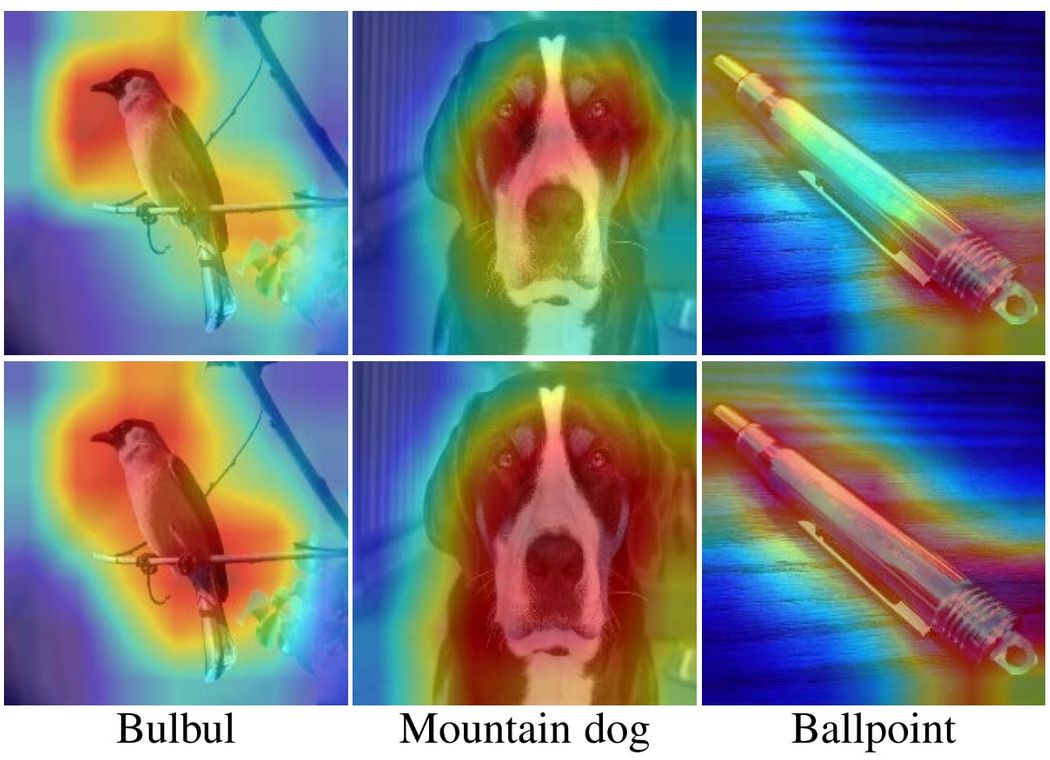

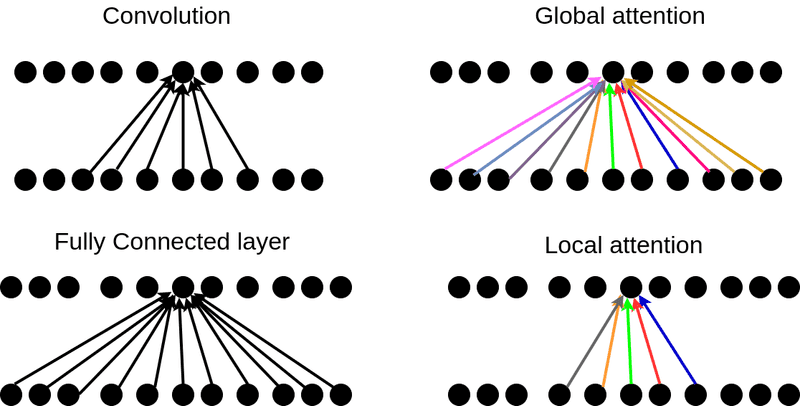

Vision Transformers: Natural Language Processing (NLP) Increases Efficiency and Model Generality | by James Montantes | Becoming Human: Artificial Intelligence Magazine