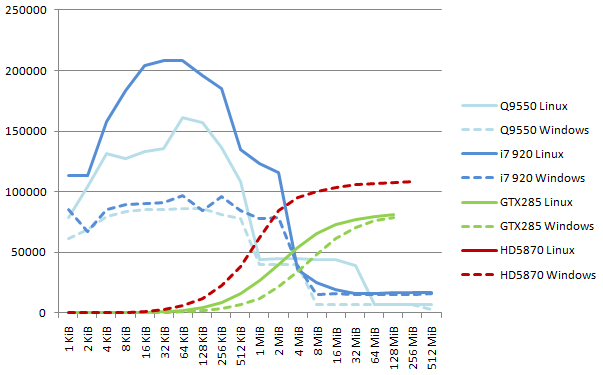

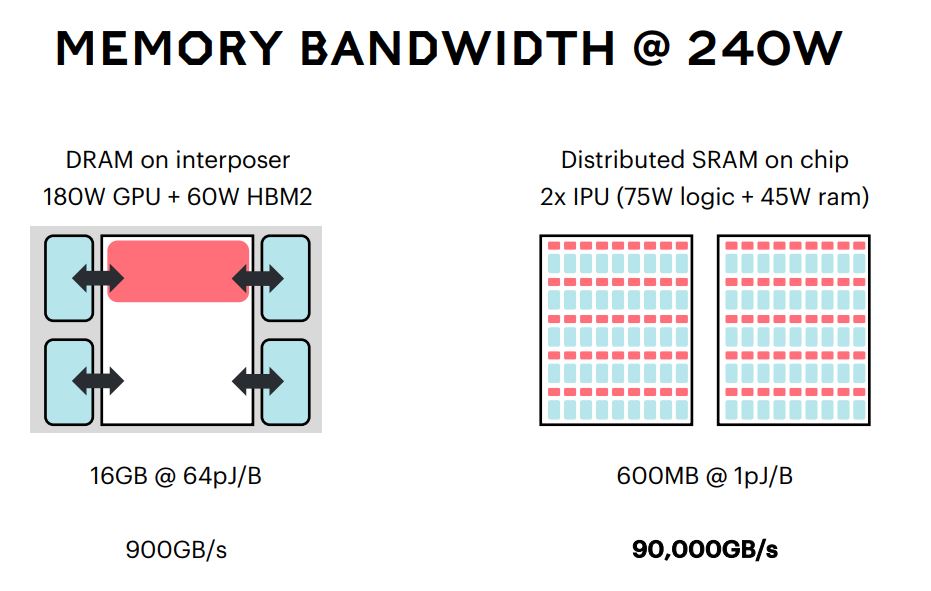

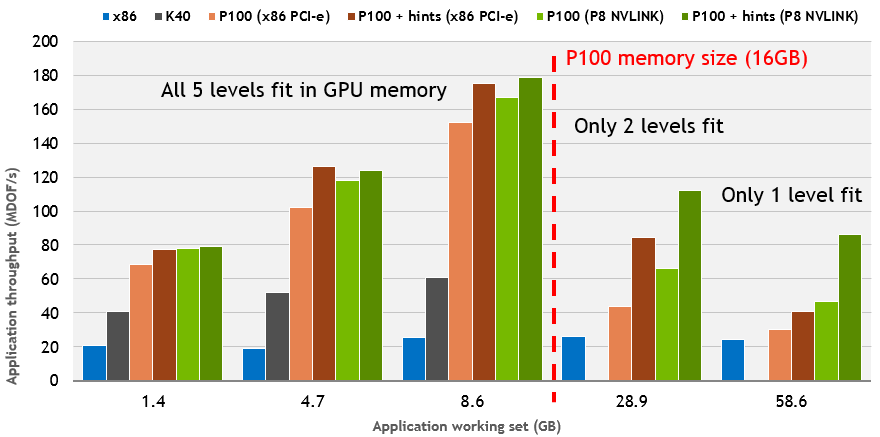

GPUs: an enlarging peak performance advantage
- Calculation: 1 TFLOPS vs. 100 GFLOPS
- Memory bandwidth: … GB/s vs. … GB/s
- GPU in every PC and …

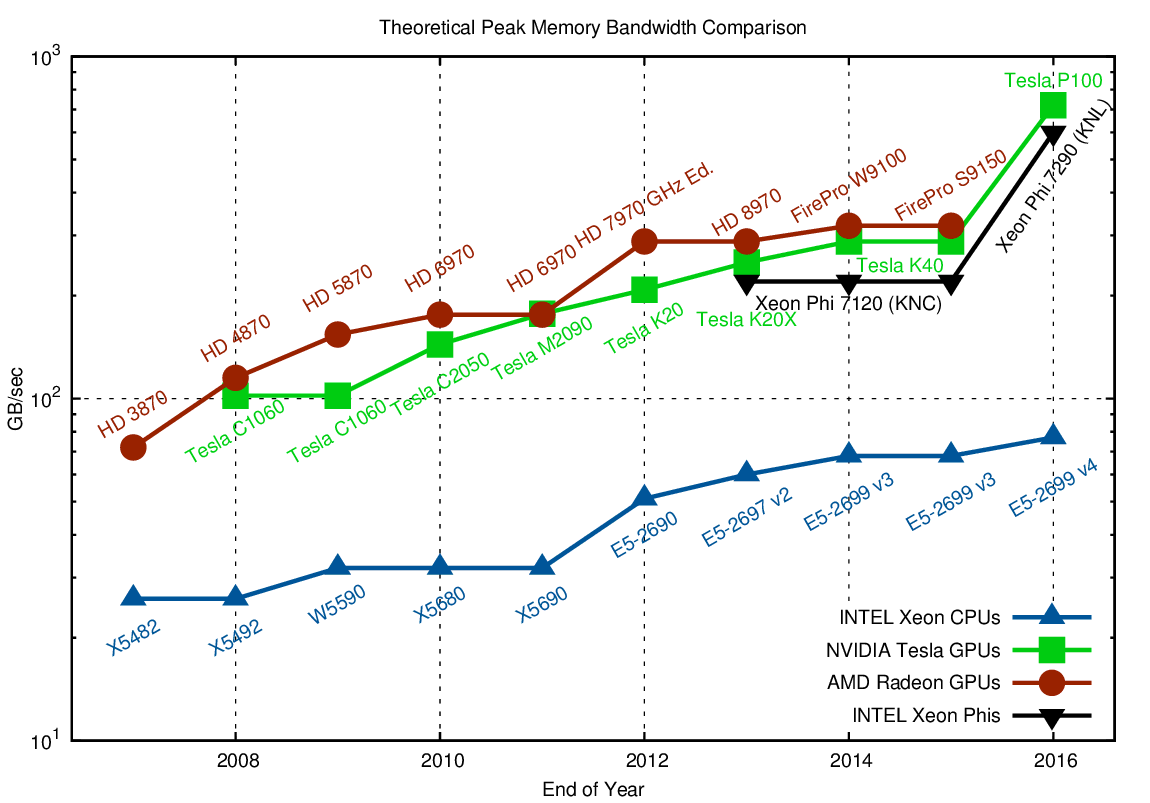

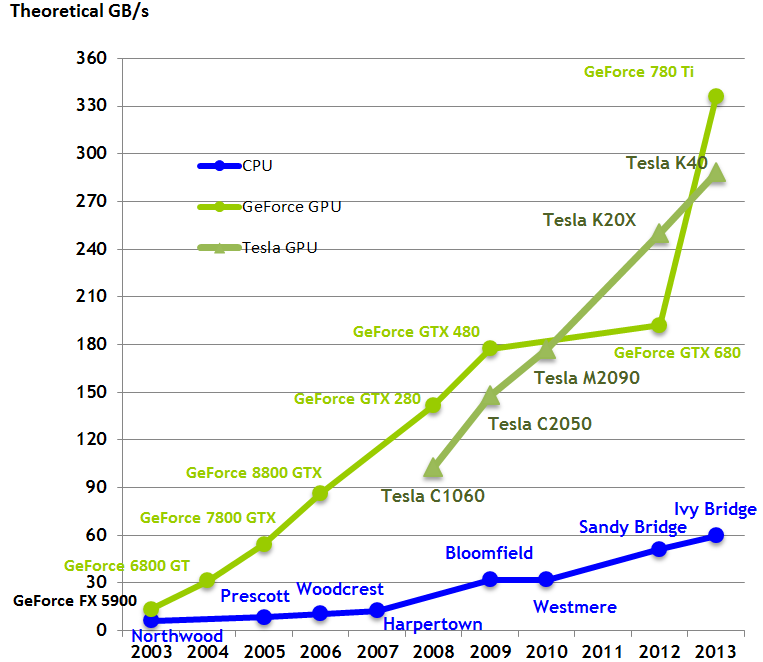

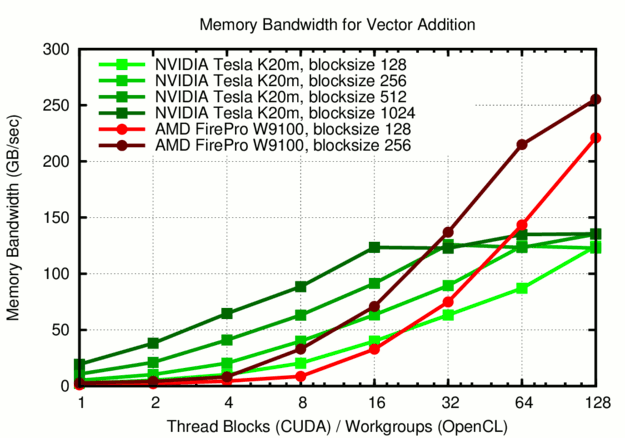

Comparison of how CPU and GPU memory bandwidth increased during the...

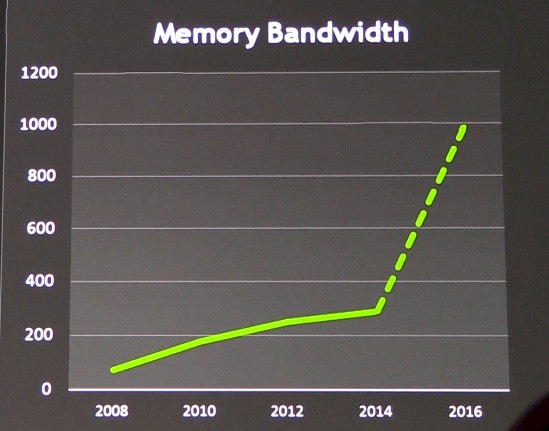

Tutorial: How to calculate GPU memory clock speed and memory bandwidth (GDDR6, GDDR6X, HBM2e, etc.)
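A common rule of thumb for this kind of calculation: peak bandwidth in GB/s equals the per-pin data rate in Gbit/s times the bus width in bits, divided by 8 bits per byte. The sketch below assumes that formula. The clock-to-data-rate multiplier `transfers_per_clock` is a hypothetical parameter, because it varies by memory type (GDDR6, GDDR6X, HBM2e) and by which clock a given tool reports. The numbers in the usage lines are illustrative, not taken from the tutorial.

```python
def per_pin_data_rate_gbps(memory_clock_mhz: float, transfers_per_clock: int) -> float:
    """Convert a memory clock (MHz) to a per-pin data rate (Gbit/s).

    `transfers_per_clock` is an assumed multiplier: it depends on the memory
    type and on which clock a given tool reports, so check the spec sheet.
    """
    return memory_clock_mhz * transfers_per_clock / 1000.0


def memory_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth (GB/s) = per-pin data rate (Gbit/s) * bus width (bits) / 8."""
    return data_rate_gbps * bus_width_bits / 8.0


if __name__ == "__main__":
    # Illustrative: 19 Gbit/s per pin on a 320-bit bus.
    print(memory_bandwidth_gb_s(19.0, 320))  # 760.0
    # Illustrative: a 2000 MHz clock with an assumed 8 transfers per clock.
    rate = per_pin_data_rate_gbps(2000, 8)   # 16.0 Gbit/s per pin
    print(memory_bandwidth_gb_s(rate, 256))  # 512.0
```

The formula gives a theoretical peak; measured bandwidth is typically lower.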

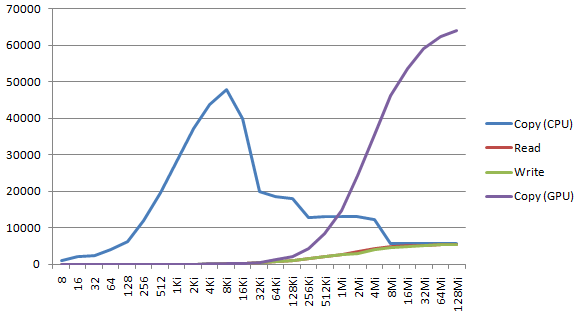

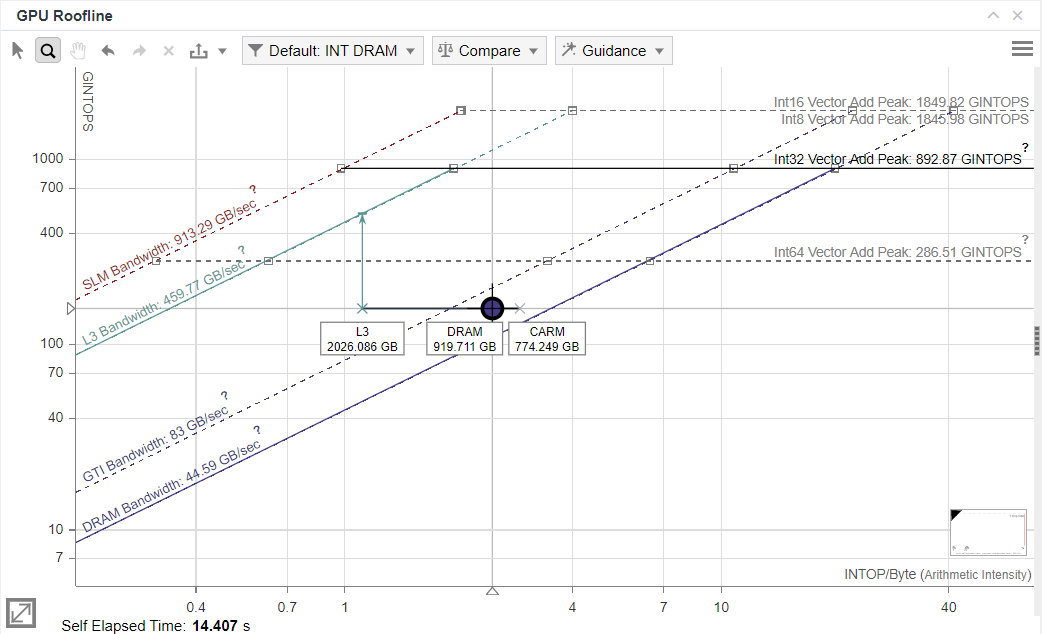

HPC Guru on Twitter: "@NERSC @nvidia #A100 #GPU Memory & tips for memory usage If you are not using lots of threads, you will not get peak memory bandwidth #HPC #AI https://t.co/KJeoo5OlKc"
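One way to reason about the tweet's claim is Little's law, a general queueing result rather than anything taken from the NERSC material: to sustain bandwidth B against memory latency L, roughly B × L bytes must be in flight at once. A minimal sketch, assuming each thread keeps exactly one load outstanding. The 1500 GB/s and 500 ns figures are illustrative assumptions, not A100 specifications or measurements.

```python
import math


def bytes_in_flight(bandwidth_gb_s: float, latency_ns: float) -> float:
    """Little's law: concurrency = throughput * latency.

    1 GB/s * 1 ns = 1 byte, so the result is in bytes.
    """
    return bandwidth_gb_s * latency_ns


def threads_needed(bandwidth_gb_s: float, latency_ns: float, bytes_per_thread: int) -> int:
    """Rough count of threads, each with one `bytes_per_thread` load
    outstanding, needed to keep memory busy (assumed model)."""
    return math.ceil(bytes_in_flight(bandwidth_gb_s, latency_ns) / bytes_per_thread)


if __name__ == "__main__":
    # Illustrative values only, not measured A100 figures.
    bw, lat = 1500.0, 500.0
    print(bytes_in_flight(bw, lat))     # 750000.0 bytes in flight
    print(threads_needed(bw, lat, 4))   # 187500 threads with one 4-byte load each
    print(threads_needed(bw, lat, 16))  # 46875 threads with one 16-byte load each
```

Under this model, wider loads or several independent loads per thread reduce the thread count needed, but the total bytes in flight stays the same.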