Filip Moons on Twitter: "New statistical methodology preprint published! 🔗https://t.co/6QYu7lzje8 👉This paper introduces a new chance-corrected inter-rater reliability measure, allowing several raters to classify each subject into one-or-more ...

Using JMP and R integration to Assess Inter-rater Reliability in Diagnosing Penetrating Abdominal Injuries from MDCT Radiologica

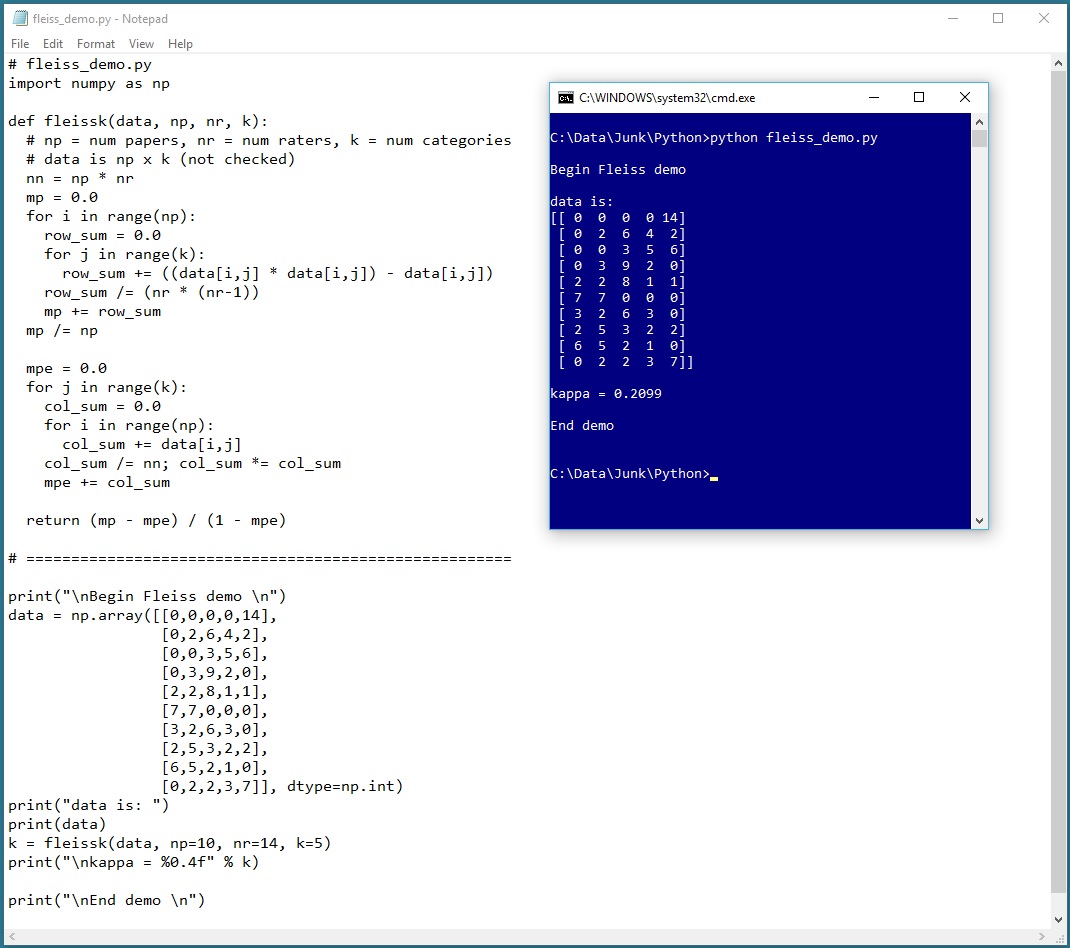

GitHub - Christian-TechUCM/Fleiss-Kappa: Python script that calculates Fleiss Kappa, a statistical measure of inter-rater agreement, on data from an Excel file.
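For reference, Fleiss' kappa compares the mean observed agreement across subjects, P̄, with the agreement expected by chance, P_e, as κ = (P̄ − P_e) / (1 − P_e). Below is a minimal sketch of that calculation in plain Python. It is not the repository's code. It assumes the ratings are already tallied into an N × k table, where `counts[i][j]` is the number of raters who put subject i in category j, and every subject is rated by the same number of raters. The function name `fleiss_kappa` is hypothetical, and reading the table from an Excel file is left out.

```python
from typing import Sequence


def fleiss_kappa(counts: Sequence[Sequence[int]]) -> float:
    """Fleiss' kappa for an N x k table of rating counts (illustrative sketch).

    counts[i][j] = number of raters who assigned subject i to category j.
    Assumes every subject was rated by the same number of raters n >= 2.
    """
    n_subjects = len(counts)
    if n_subjects == 0:
        raise ValueError("need at least one subject")
    n_categories = len(counts[0])
    n_raters = sum(counts[0])
    if n_raters < 2:
        raise ValueError("need at least two raters per subject")
    for row in counts:
        if len(row) != n_categories or sum(row) != n_raters:
            raise ValueError("all rows need the same categories and rater count")

    # Observed agreement per subject: P_i = (sum_j n_ij^2 - n) / (n (n - 1))
    p_i = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_i) / n_subjects

    # Category proportions p_j and chance agreement P_e = sum_j p_j^2
    total = n_subjects * n_raters
    p_j = [sum(row[j] for row in counts) / total for j in range(n_categories)]
    p_e = sum(p * p for p in p_j)

    if p_e == 1.0:
        # Every rating fell in a single category, so kappa is undefined.
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_bar - p_e) / (1 - p_e)


if __name__ == "__main__":
    # Three raters, two subjects, perfect agreement -> kappa of 1.0
    print(fleiss_kappa([[3, 0], [0, 3]]))
```

The table format keeps the function independent of how the raw ratings are stored. A spreadsheet of per-rater labels would first need to be tallied into per-subject category counts.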