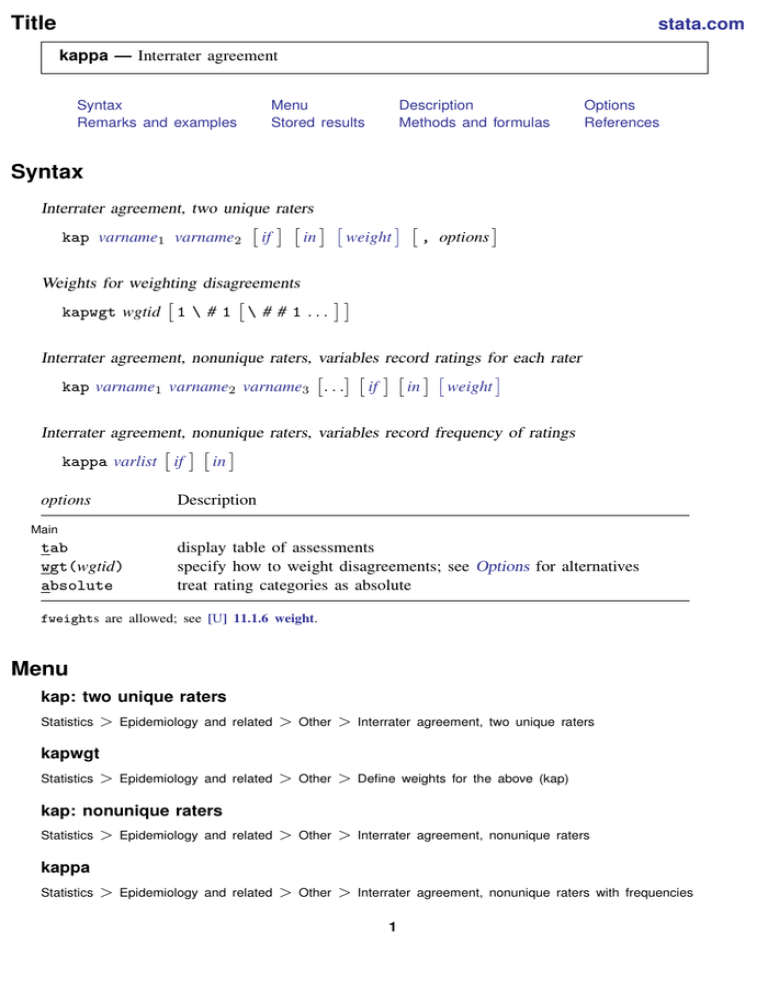

Inter-rater reliability of the BAS MQ, weighted kappa values for the 23... | Download Scientific Diagram

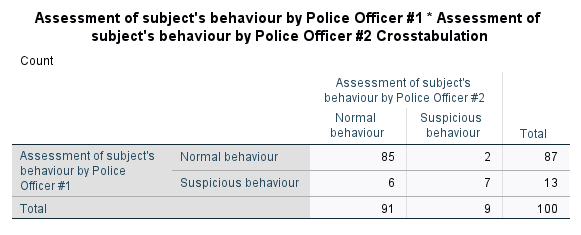

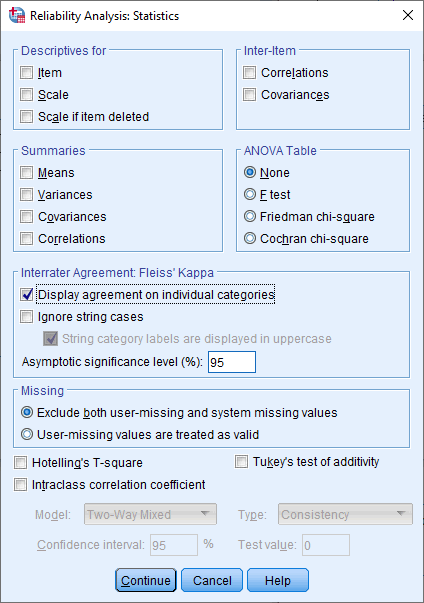

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

![Cohen's quadratically weighted kappa is higher than linearly weighted kappa for tridiagonal agreement tables | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/5df092de279231383db41edabbc6e93624b302b6/2-Table1-1.png)

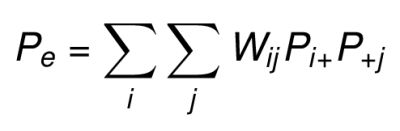

[PDF] Cohen's quadratically weighted kappa is higher than linearly weighted kappa for tridiagonal agreement tables | Semantic Scholar

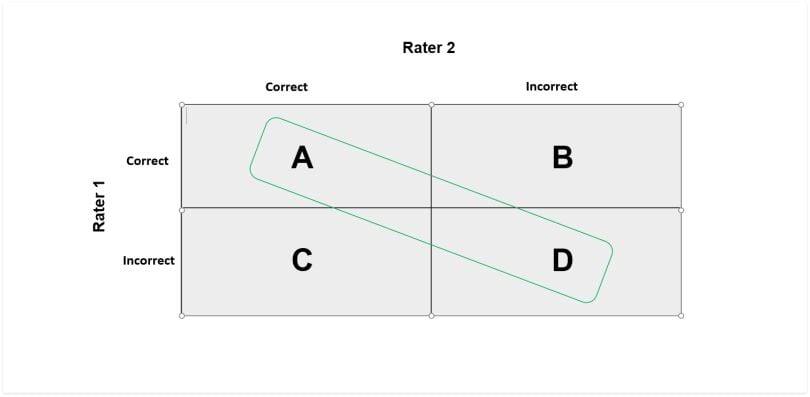

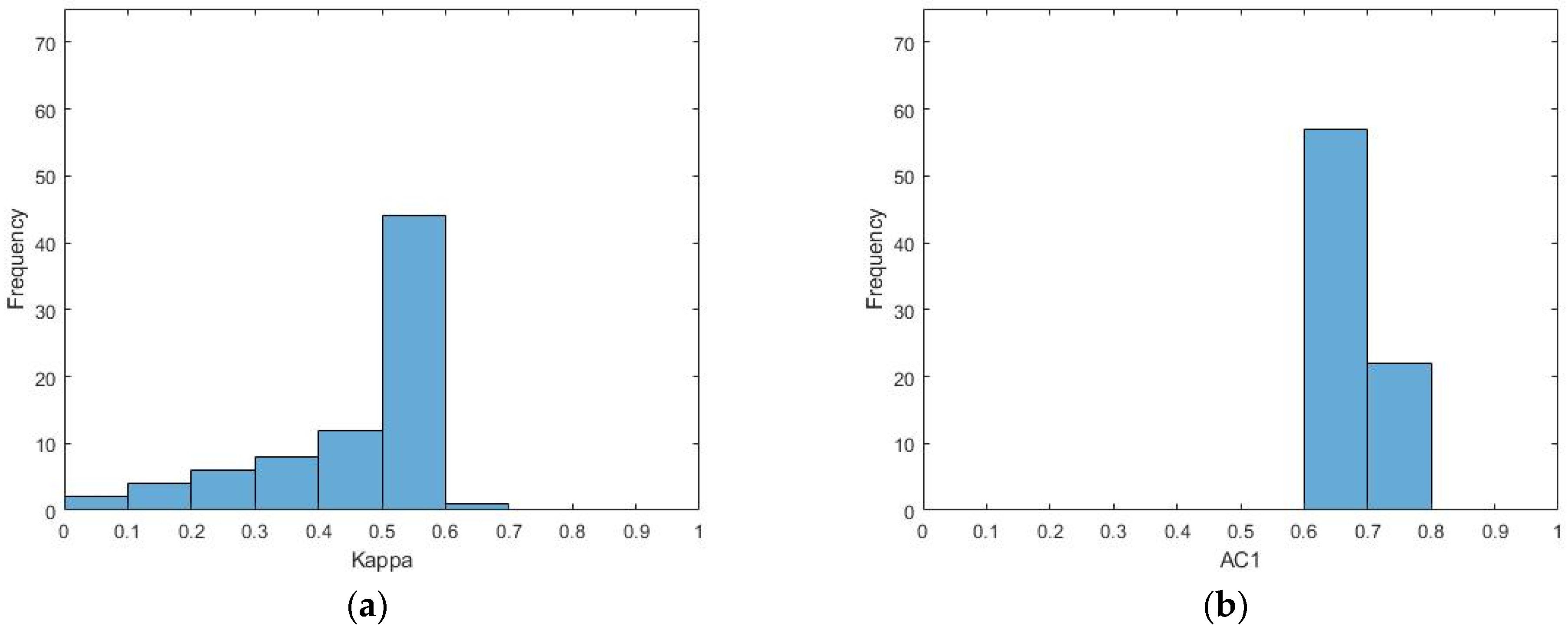

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

![Large sample standard errors of kappa and weighted kappa. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/f2c9636d43a08e20f5383dbf3b208bd35a9377b0/2-Table1-1.png)